Closing the AI Loop: Using Engagement Signals to Improve Content Over Time

Apr 28, 2026 • ArchyPress

The most valuable AI content products aren't the ones with the most sophisticated models. They're the ones where every generation cycle learns from what the last cycle proved.

One-off AI generation saves time. Sustained content improvement comes from a feedback loop that closes the gap between what AI generates and what your audience actually responds to.

At ArchySocial, the generate–publish–measure–refine cycle is central to how we think about content production. As part of the Archy AI Platform and Microsoft for Startups Hub, we're committed to building measurable systems — not black boxes where content goes in, posts come out, and nothing improves.

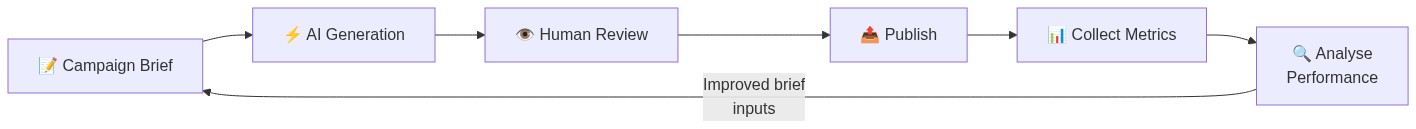

The Feedback Loop in Full

This is the loop every serious content operation needs — and the one most AI tools don't complete. Generation without measurement is just fast guessing.

1) Metrics Must Be Decision-Useful

Every social network provides impressions, likes, and shares. The problem isn't collecting numbers — it's making them actionable.

ArchySocial focuses on metrics that inform what to change in the next brief:

Topic Sustainability

Which topics sustain audience engagement across 3+ posts? Not one-hit spikes.

Format Effectiveness

Do narrative captions outperform punchy one-liners on LinkedIn? Do question hooks outperform statement hooks on X?

Time Window Quality

When do your specific audiences engage most? Not generic best-practice windows.

Network Differentiation

Does the same message perform differently across networks? Which channel is your strongest?
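Topic Sustainability can be sketched in a few lines of code. This is a minimal illustration, not ArchySocial's implementation: the field names (`topic`, `engagements`, `impressions`), the definition of engagement rate as engagements divided by impressions, the 5% threshold, and the sample numbers are all assumptions made for the example. The median is used so that a single viral post cannot carry a topic on its own.

```python
from collections import defaultdict
from statistics import median

def sustained_topics(posts, min_posts=3, min_median_rate=0.05):
    """Return {topic: median engagement rate} for topics that hold up across posts.

    A topic qualifies only if it has at least `min_posts` posts and its
    median rate clears `min_median_rate` (both thresholds are assumptions).
    """
    rates_by_topic = defaultdict(list)
    for post in posts:
        if post["impressions"] > 0:  # skip posts with no reach to avoid dividing by zero
            rates_by_topic[post["topic"]].append(post["engagements"] / post["impressions"])
    return {
        topic: median(rates)
        for topic, rates in rates_by_topic.items()
        if len(rates) >= min_posts and median(rates) >= min_median_rate
    }

# Illustrative data only: "productivity" has one spike, "ai-tooling" is steady.
posts = [
    {"topic": "ai-tooling", "engagements": 60, "impressions": 1000},
    {"topic": "ai-tooling", "engagements": 80, "impressions": 1000},
    {"topic": "ai-tooling", "engagements": 70, "impressions": 1000},
    {"topic": "productivity", "engagements": 300, "impressions": 1000},
    {"topic": "productivity", "engagements": 10, "impressions": 1000},
    {"topic": "productivity", "engagements": 20, "impressions": 1000},
]
sustained = sustained_topics(posts)
print(sustained)
```

With this sample data only `ai-tooling` qualifies: the `productivity` spike lifts that topic's mean but not its median, which is the distinction between sustained engagement and a one-hit result.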

2) Post-Level Context Is Where Optimisation Happens

Campaign-level aggregates hide the patterns that matter. A 15% engagement uplift campaign-wide tells you nothing about which posts drove it or why.

Consider a real example: a campaign with 8 posts across LinkedIn and X. Campaign-level data says engagement is up 15%. Post-level data reveals:

Post #3 on X: 14.8% engagement rate — the hook was 'Stop wasting time on scheduling tools'

Post #3 on LinkedIn: 5.6% engagement rate — same point, buried in paragraph 2

When the hook moved to line 1 on LinkedIn in the next campaign, engagement jumped to 12%

Network-specific hook positioning is now a systematic brief instruction

That's the difference between aggregate reporting and actionable insight. Post-level tracking with network context reveals repeatable, transferable patterns.
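The contrast between the aggregate and the post-level view can be sketched as follows. The record layout, the helper names (`engagement_rate`, `campaign_rate`, `network_gaps`), and the raw counts are hypothetical; the counts were picked only so that the rates match the 14.8% and 5.6% figures above, and engagement rate is assumed to mean engagements divided by impressions.

```python
from collections import defaultdict

def engagement_rate(engagements, impressions):
    """Engagements per impression; 0.0 when there were no impressions."""
    return engagements / impressions if impressions else 0.0

def campaign_rate(posts):
    """One aggregate rate for the whole campaign."""
    return engagement_rate(
        sum(p["engagements"] for p in posts),
        sum(p["impressions"] for p in posts),
    )

def network_gaps(posts):
    """For each post_id, return (best_network, worst_network, rate_gap)."""
    by_post = defaultdict(dict)
    for p in posts:
        by_post[p["post_id"]][p["network"]] = engagement_rate(
            p["engagements"], p["impressions"]
        )
    gaps = {}
    for post_id, rates in by_post.items():
        best = max(rates, key=rates.get)
        worst = min(rates, key=rates.get)
        gaps[post_id] = (best, worst, rates[best] - rates[worst])
    return gaps

# Hypothetical counts chosen to reproduce the rates quoted in the example.
posts = [
    {"post_id": 3, "network": "X", "engagements": 148, "impressions": 1000},
    {"post_id": 3, "network": "LinkedIn", "engagements": 56, "impressions": 1000},
]
overall = campaign_rate(posts)
gaps = network_gaps(posts)
print(f"campaign-level rate: {overall:.1%}")
for post_id, (best, worst, gap) in gaps.items():
    print(f"post #{post_id}: {best} beats {worst} by {gap:.1%}")
```

The aggregate collapses both variants into one number, while the per-post breakdown surfaces the gap between networks that prompted the hook-placement change.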

3) Daily Aggregation Separates Signal from Noise

Social media data is inherently noisy: viral spikes, trending-topic contamination, audience fluctuations. Judging strategy from raw numbers on individual posts is misleading.

Daily aggregation into rolling trends is what makes it possible to ask meaningful questions:

Is baseline engagement improving week-over-week, or just spiking on outliers?

Did the Q2 campaign shift the average, or was it noise?

Are gains coming from one network or consistently across channels?

Is the Monday 9 AM time slot still outperforming Thursday 5 PM?
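A rough sketch of the first question, baseline versus outliers, is shown here. It assumes post records with hypothetical `day`, `engagements`, and `impressions` fields, and it uses a trailing seven-day median as the baseline. The median is this example's choice rather than something the article prescribes; a rolling mean would work the same way but is pulled upward by a single viral day.

```python
from collections import defaultdict
from datetime import date, timedelta
from statistics import median

def daily_rates(posts):
    """Sum engagements and impressions per day, then return {day: rate}."""
    totals = defaultdict(lambda: [0, 0])
    for p in posts:
        totals[p["day"]][0] += p["engagements"]
        totals[p["day"]][1] += p["impressions"]
    return {day: e / i for day, (e, i) in sorted(totals.items()) if i}

def rolling_median(series, window=7):
    """Trailing median over the last `window` days that have data."""
    days = sorted(series)
    trend = {}
    for idx, day in enumerate(days):
        recent = [series[d] for d in days[max(0, idx - window + 1): idx + 1]]
        trend[day] = median(recent)
    return trend

# Illustrative data: week 1 sits at 5% with one viral day, week 2 sits at 6%.
start = date(2026, 4, 1)
posts = []
for offset in range(14):
    engagements = 50 if offset < 7 else 60
    if offset == 2:
        engagements = 300  # single outlier in week 1
    posts.append({
        "day": start + timedelta(days=offset),
        "engagements": engagements,
        "impressions": 1000,
    })

rates = daily_rates(posts)
trend = rolling_median(rates)
days = sorted(trend)
print(f"end of week 1 baseline: {trend[days[6]]:.1%}")
print(f"end of week 2 baseline: {trend[days[13]]:.1%}")
```

In this made-up series the baseline rises from week 1 to week 2 even though the largest single day falls in week 1, which is the kind of distinction raw post numbers tend to obscure.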

4) The Loop Must Feed Generation Inputs

This is where most AI tools fail completely: metrics are shown, but the next generation cycle ignores everything that was learned.

At ArchySocial, analytics exist to change what you put in the next brief:

Narrative Depth Signal

Your audience responded best to posts with 2–3 paragraphs of context. → Next brief: 'Provide narrative depth, not one-liners.'

Hook Format Signal

Posts that started with a question outperformed statements 2:1. → Next brief: 'Open with a question hook.'

Timing Pattern Signal

X peaks 9–11 AM EST; LinkedIn peaks noon–2 PM EST. → Next schedule: publish in each network's peak window, adjusted for your audience's time zones.

Topic Performance Signal

AI tooling posts got 3× engagement vs. generic productivity tips. → Next brief: 'Stay on AI angle, not general productivity.'
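The step from measured signal to brief instruction can be sketched as a small rule: within each dimension, if the best-performing option beats the runner-up by a clear margin, emit the matching instruction. Everything here is an assumption for illustration, including the `TEMPLATES` table, the dimension and option names, the 1.5× margin, and the sample rates. It is not a description of how ArchySocial derives briefs.

```python
# Hypothetical mapping from (dimension, winning option) to a brief instruction.
TEMPLATES = {
    ("hook", "question"): "Open with a question hook.",
    ("depth", "narrative"): "Provide narrative depth, not one-liners.",
    ("topic", "ai-tooling"): "Stay on AI angle, not general productivity.",
}

def brief_instructions(rates_by_dimension, min_lift=1.5):
    """Return instructions for dimensions where the winner clearly leads.

    `rates_by_dimension` maps a dimension name to {option: engagement rate}.
    An instruction is emitted only when best / runner-up >= `min_lift`.
    """
    instructions = []
    for dimension, rates in rates_by_dimension.items():
        if len(rates) < 2:
            continue  # nothing to compare against
        ranked = sorted(rates.items(), key=lambda kv: kv[1], reverse=True)
        (best, best_rate), (_, runner_up_rate) = ranked[0], ranked[1]
        if runner_up_rate > 0 and best_rate / runner_up_rate >= min_lift:
            template = TEMPLATES.get((dimension, best))
            if template:
                instructions.append(template)
    return instructions

# Illustrative rates: hooks differ 2:1, topics 3:1, depth only marginally.
signals = {
    "hook": {"question": 0.08, "statement": 0.04},
    "topic": {"ai-tooling": 0.09, "productivity": 0.03},
    "depth": {"narrative": 0.05, "one-liner": 0.045},
}
next_brief = brief_instructions(signals)
for line in next_brief:
    print(line)
```

Requiring a margin before changing the brief is a deliberate design choice in this sketch: small differences, like the depth comparison in the sample data, are treated as noise and leave the brief unchanged.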

The Compound Effect

Each generation cycle that incorporates the previous cycle's performance data produces better content than the one before it. Not because the AI model improved — but because the inputs to the model improved.

That's the compound effect of a feedback loop. Month one, you're generating decent content. Month three, you're generating content that's structurally optimised for your specific audience, on your specific networks, at your specific times. No generic best-practice guessing.

The strongest AI content products are not the ones with higher token limits. They're the ones where every cycle gets smarter from measured outcomes.

See the loop in action

Full AI generation, per-network optimisation, analytics included. Free until June 1, 2026.