When Your AI Co-pilot Breaks the Build: Guardrails for Automated Code Fixes

May 03, 2026 • ArchyPress

Your CI pipeline has 12 type errors. An AI co-pilot swoops in with a fix. The fix introduces syntax errors that are worse than the problem it set out to solve. This is a story about why automated code changes need guardrails, and what those guardrails should look like.

The Setup: AI Sees Red, AI Tries to Help

We had 12 TypeScript TS2540 errors in our CI pipeline — all caused by assigning to process.env.NODE_ENV in test files under strict mode (if you're curious about the root cause, check out our companion post on TypeScript strict mode and readonly process.env). The errors were clear, consistent, and mechanical. A perfect candidate for automated fixing, right?

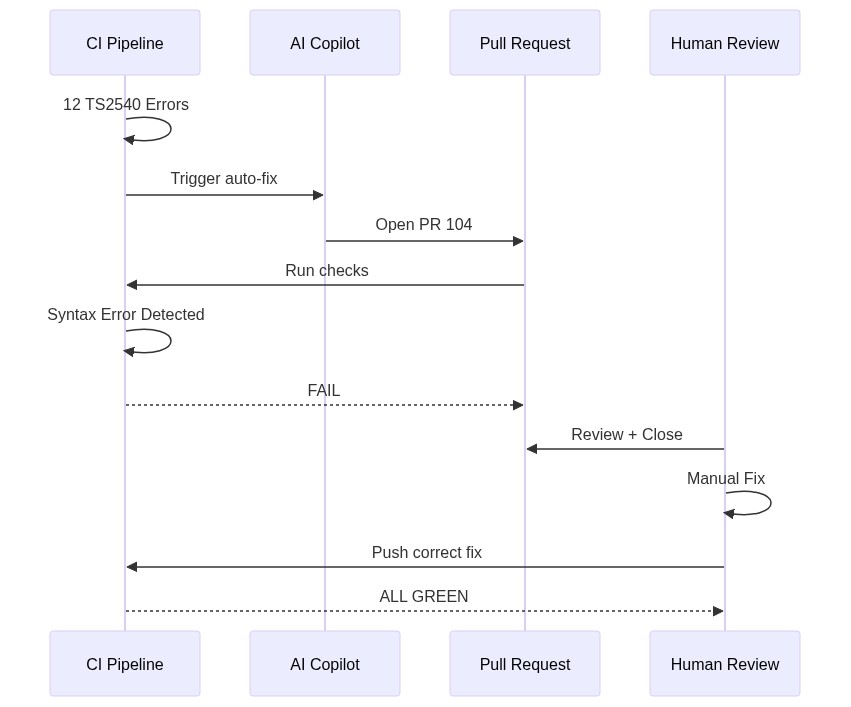

GitHub Copilot's automated fix feature created PR #104. It identified the right files and attempted the right fix pattern. So far, so good. But then something went sideways.

What the AI Actually Did

The PR contained changes that:

Removed closing braces from function bodies, leaving syntax incomplete

Deleted the final newline at EOF (a linting violation in most configs)

Applied partial fixes that addressed the type error but broke the surrounding code structure

In other words, the AI understood what needed to change (the process.env assignments) but not how the surrounding code depended on the exact structure it was modifying. It was like a surgeon who finds the tumor but accidentally nicks an artery on the way out.
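
The two mechanical failures in that list (unclosed braces and a missing final newline) are cheap to detect before a full CI run. What follows is a minimal sketch, not the check our pipeline actually ran. `checkStructure` and `StructureReport` are hypothetical names, and the scan is deliberately naive: it does not skip delimiters inside strings, comments, or template literals, so treat a positive result as a hint rather than a verdict.

```typescript
// Hypothetical helper: a naive delimiter-balance and EOF-newline check.
// Assumption: delimiters inside strings or comments are NOT skipped.
interface StructureReport {
  unclosed: string[];        // opening delimiters that were never closed
  unexpectedClose: string[]; // closing delimiters with no matching opener
  endsWithNewline: boolean;  // false when the final newline is missing
}

const OPENERS = "([{";
const CLOSERS = ")]}";

function checkStructure(source: string): StructureReport {
  const stack: string[] = [];
  const unexpectedClose: string[] = [];

  for (let i = 0; i < source.length; i++) {
    const ch = source[i];
    if (OPENERS.indexOf(ch) !== -1) {
      stack.push(ch);
      continue;
    }
    const closerIndex = CLOSERS.indexOf(ch);
    if (closerIndex === -1) continue; // not a delimiter

    // A closer is valid only if it matches the most recent opener.
    if (stack.length > 0 && stack[stack.length - 1] === OPENERS[closerIndex]) {
      stack.pop();
    } else {
      unexpectedClose.push(ch);
    }
  }

  return {
    unclosed: stack,
    unexpectedClose,
    endsWithNewline: source.length > 0 && source[source.length - 1] === "\n",
  };
}
```

A real parser (for example the TypeScript compiler's own syntactic diagnostics) is more reliable than character counting. The value of a sketch like this is only that it fails fast on the exact damage described above.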

Why CI Caught It (and Why That Matters)

Here's where the story gets interesting. CI ran on PR #104. It ran typecheck, linting, and tests — the same pipeline that caught the original 12 errors. And it correctly flagged the AI's fix as broken. The PR showed a bright red "Checks Failed" badge.

This is exactly what's supposed to happen. CI is not just there to validate your code — it's there to validate all code, regardless of authorship. Whether a change comes from a junior developer, a senior architect, or an AI co-pilot, it goes through the same gates. No exceptions.

The fact that an AI opened the PR didn't give it a pass on any check. The same standards applied. And the code didn't meet them. The PR was reviewed by a human, found wanting, and closed. The correct fix was applied manually — a one-line typed alias pattern that the AI had roughly identified but couldn't execute cleanly.
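
This post does not reproduce the exact line we merged, so the following is only an illustration of what a typed alias can look like. `ReadonlyEnv`, `setNodeEnv`, and `fakeEnv` are hypothetical stand-ins that model a readonly `NODE_ENV` without depending on Node's type definitions.

```typescript
// Hypothetical stand-in for an env type whose NODE_ENV is readonly.
interface ReadonlyEnv {
  readonly NODE_ENV?: string;
  [key: string]: string | undefined;
}

function setNodeEnv(env: ReadonlyEnv, value: string): void {
  // Direct assignment is what triggers the error:
  // env.NODE_ENV = value;
  // TS2540: Cannot assign to 'NODE_ENV' because it is a read-only property.

  // Typed alias: the same object, viewed through a type that has no
  // readonly modifier. This is the one-line change; nothing else moves.
  const writableEnv: Record<string, string | undefined> = env;
  writableEnv["NODE_ENV"] = value;
}

// Illustrative usage with a fake env object.
const fakeEnv: ReadonlyEnv = { NODE_ENV: "development" };
setNodeEnv(fakeEnv, "test");
```

In a test file the same idea would presumably be applied to `process.env` itself. The point is that the fix touches one declaration and leaves the surrounding braces and statements alone.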

The Trust Calibration Problem

AI coding assistants are powerful. They can read error messages, identify patterns, and suggest fixes at remarkable speed. But there's a calibration problem: they operate with high confidence and variable accuracy. When the fix is simple (rename a variable, add an import), AI usually nails it. When the fix requires understanding the structural context of a code block (where braces close, what indentation means semantically, how a change in one line affects the validity of the next ten), accuracy drops.

This is not a criticism of AI tools. It's an observation that should shape how we integrate them into our workflows.

Building Guardrails That Actually Work

Based on this experience, here's the verification checklist we now run on any AI-generated code change before it can be merged:

Typecheck must pass: Run tsc --noEmit on the full project, not just the changed files. AI fixes can introduce new type errors in unexpected places.

Linting must pass: AI-generated changes often violate formatting rules (missing newlines, incorrect indentation) in ways that pass type checking but fail lint.

Test suite must pass: Not just the tests related to the fix, but the entire suite. Structural changes can break test helpers and shared utilities.

Syntax validation: Explicitly check for unmatched braces, incomplete expressions, and missing statement terminators. These are exactly the errors AI tends to introduce.

Diff review by human: Even if all automated checks pass, a human should review the diff. AI changes can be semantically correct but stylistically wrong, or correct for the immediate file but wrong for the project's patterns.
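
The automated part of the checklist above can be sketched as a small runner that executes every gate and reports all failures instead of stopping at the first one. This is a sketch under assumptions, not our actual script: `Check`, `Exec`, `CHECKS`, and `runChecks` are hypothetical names, and the lint and test commands (`eslint .`, `npm test`) are assumed, since only `tsc --noEmit` is named in the checklist. The command executor is injected so the logic stays independent of how processes are spawned.

```typescript
// Hypothetical gate runner. Only `tsc --noEmit` comes from the checklist;
// the lint and test commands are assumptions about a typical project.
interface Check {
  name: string;
  command: string;
  args: string[];
}

// Runs a command and returns its exit code (0 means success).
type Exec = (command: string, args: string[]) => number;

const CHECKS: Check[] = [
  { name: "typecheck", command: "npx", args: ["tsc", "--noEmit"] },
  { name: "lint", command: "npx", args: ["eslint", "."] }, // assumed linter
  { name: "test", command: "npm", args: ["test"] },        // assumed test script
];

function runChecks(
  checks: Check[],
  exec: Exec
): { passed: string[]; failed: string[] } {
  const passed: string[] = [];
  const failed: string[] = [];

  // Run every gate even after a failure, so one report shows all problems.
  for (const check of checks) {
    const exitCode = exec(check.command, check.args);
    (exitCode === 0 ? passed : failed).push(check.name);
  }

  return { passed, failed };
}
```

In a Node script, `exec` could wrap `spawnSync` from `node:child_process` and return its `status`, treating a `null` status as a failure. The human diff review stays outside the script on purpose: it is the one gate that cannot be reduced to an exit code.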

The Broader Pattern: AI as Collaborator, Not Author

The mental model that works best is treating AI code contributions exactly like you'd treat contributions from a new team member who's brilliant but hasn't read all your docs yet. They'll get the broad strokes right. They'll occasionally miss context. They need code review.

The difference is speed. A human contributor who introduces syntax errors will usually catch them in local development. An AI contributor operating at CI speed can push broken code before any human sees it. That's why automated guardrails are non-negotiable:

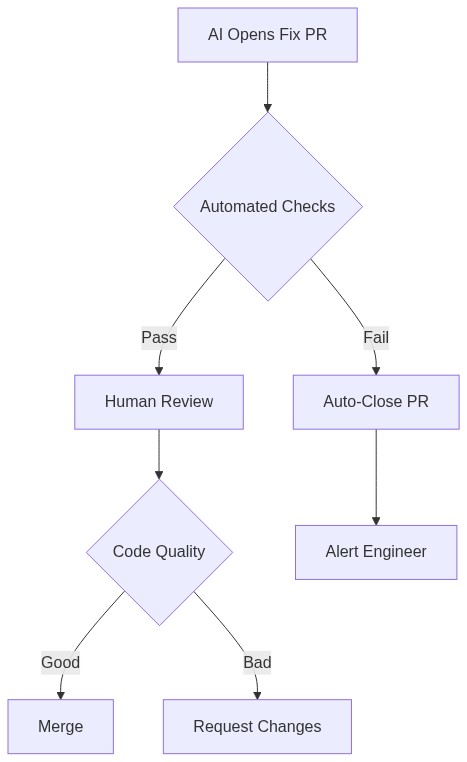

Gate all AI changes behind CI

Never auto-merge AI-generated PRs. All PRs from bots must pass the full CI pipeline AND receive human approval. Your merge protection rules are your last line of defense.

Layer your verification

Pair typecheck + lint + test. Each catches different failure modes. An AI fix that passes typecheck can still fail lint. One that passes both can still break tests.

Inspect failure patterns

When AI fixes fail, don't just close the PR. Look at WHAT it got wrong. The pattern of failure tells you where your automation has gaps that a human needs to cover.

Set up auto-close policies

Consider auto-closing PRs that fail CI within a time window. If the AI's fix doesn't pass checks on the first attempt, a human can often apply the fix faster than they could debug the AI's approach.
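
The merge gate and the auto-close policy above can be combined into one small decision function. The following is a sketch under assumptions: the `PullRequest` shape, the `decide` function, and the 60-minute window are all hypothetical, and a real setup would read these fields from your Git host's API and enforce the merge rule through branch protection rather than application code.

```typescript
// Hypothetical policy sketch; field names and the window are assumptions.
type CiStatus = "pending" | "passed" | "failed";

interface PullRequest {
  authorIsBot: boolean;
  ciStatus: CiStatus;
  humanApprovals: number;
  minutesSinceOpened: number;
}

type Decision = "eligible-to-merge" | "wait" | "auto-close";

const AUTO_CLOSE_WINDOW_MINUTES = 60; // assumed value, tune per team

function decide(pr: PullRequest): Decision {
  if (pr.ciStatus === "failed") {
    // Bot PRs that fail CI inside the window are closed, not debugged.
    // Human-authored PRs stay open so the author can push a fix.
    const insideWindow = pr.minutesSinceOpened <= AUTO_CLOSE_WINDOW_MINUTES;
    return pr.authorIsBot && insideWindow ? "auto-close" : "wait";
  }

  // Same gate for every author: green CI AND at least one human approval.
  if (pr.ciStatus === "passed" && pr.humanApprovals >= 1) {
    return "eligible-to-merge";
  }

  return "wait";
}
```

Note that nothing in this function merges anything. A bot PR with green checks and no approval still returns `"wait"`, which is the behavior the "never auto-merge" rule asks for.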

What We Changed After This

This incident prompted us to build a CI hooks extension into our development workflow system (covered in detail in our third post on self-healing CI hooks). The extension runs typecheck, linting, and tests locally before any push, catches common error patterns like TS2540 automatically, and verifies the fix before it ever reaches CI.

The goal isn't to prevent AI from contributing. It's to make the contribution pipeline robust enough that it doesn't matter who (or what) authored the code. Quality gates apply to everyone.
